Are your RESTful services ready to handle a surge in traffic? One way to find out...

Do you know whether your RESTful services will take the heat when traffic to your service or website grows many times over?

Dave works for an eCommerce startup. One day, he was given the task of finding out whether the micro-services he has been writing are scalable and optimized. The marketing team wants to run a big flash sale for a couple of days and expects a massive influx of online traffic to the website and the mobile app.

Dave's task is to figure out how services like order, checkout and other product-related services would perform in such situations.

In this article, we will load-test three REST API frameworks written in NodeJS, Python & GoLang, using the Apache Benchmark tool.

The beauty of micro-services is that different teams can write services backed by business logic in whichever programming language & ecosystem suits their use case.

Our Rig -

- 1.2 GHz Quad-core Cortex A53

- A whopping 1 GB RAM

- 16GB Class 10 SD Card.

You could run this benchmark on any compatible system. The numbers are only comparable with each other when every framework is tested on the same hardware.

Environment -

Server machine [mighty rpi3] -

- OS - Raspberry Pi OS on armv7l

- Kernel - 5.10.52-v7+

- Application runtimes -

Client machine [my laptop ;-)]-

- OS - Fedora 34

- Kernel - 5.13.5-200.fc34.x86_64

- Ryzen 5500U (6 Cores & 8 GB DDR4 3200 MHz RAM)

- Apache Benchmark tool (AB tool)

And the test -

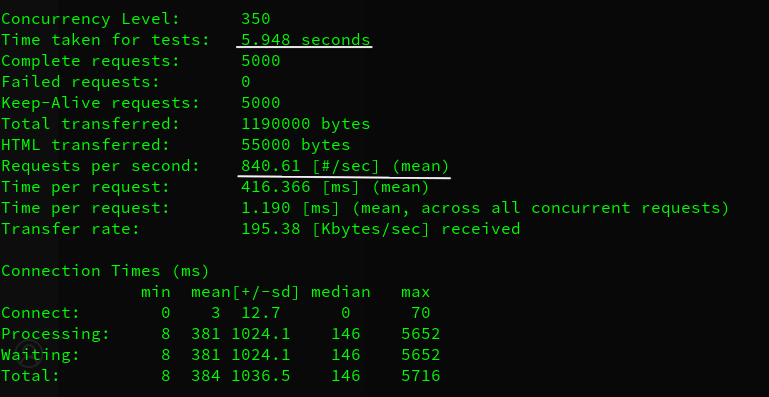

ab -k -c 350 -n 5000 http://<rpi ip>:3000/

The above command sends 5k requests in total, 350 of them concurrently (`-c 350`), and `-k` enables HTTP keep-alive so that connections are reused across requests. One could tweak these numbers as per requirements.

The entire testing session ran for a few minutes, and the results are in the screenshots below. We will start with FastAPI, followed by ExpressJS and then Fiber.
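If you want to repeat the same run against several services, it can help to script the command. Below is a minimal sketch, not the script we used: `build_ab_command` and `run_ab` are hypothetical helper names, and `192.0.2.10` is a placeholder for your Raspberry Pi's IP. Only the `-k`, `-c` and `-n` flags from the command above are used.

```python
import shlex
import shutil
import subprocess
from typing import List


def build_ab_command(url: str, total_requests: int = 5000,
                     concurrency: int = 350, keep_alive: bool = True) -> List[str]:
    """Build the argument list for an Apache Benchmark (ab) run."""
    # ab refuses to run when the concurrency is higher than the request count.
    if concurrency > total_requests:
        raise ValueError("concurrency cannot exceed the total number of requests")
    cmd = ["ab"]
    if keep_alive:
        cmd.append("-k")  # reuse connections via HTTP keep-alive
    cmd += ["-c", str(concurrency), "-n", str(total_requests), url]
    return cmd


def run_ab(url: str, **options) -> str:
    """Run ab against `url` and return its text report (requires ab on PATH)."""
    if shutil.which("ab") is None:
        raise RuntimeError("ab was not found on PATH")
    completed = subprocess.run(build_ab_command(url, **options),
                               capture_output=True, text=True, check=True)
    return completed.stdout


if __name__ == "__main__":
    # Placeholder address: replace it with the IP of your own server.
    print(shlex.join(build_ab_command("http://192.0.2.10:3000/")))
```

Keeping the command builder separate from the runner means you can inspect or log the exact command before sending any traffic.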

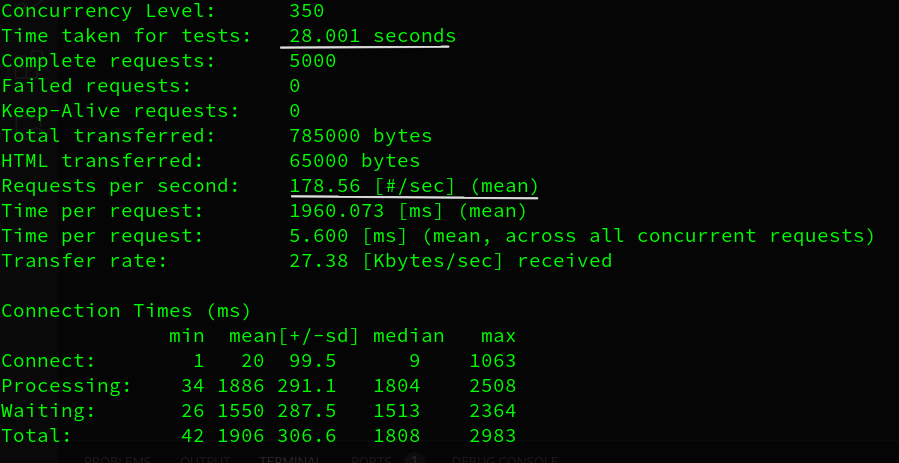

FastAPI (Sync) - Python

FastAPI in synchronous mode clocks in at ~178 requests per second.

FastAPI (Async) - Python

FastAPI in asynchronous mode clocks in at ~228 requests per second. One important thing to point out: we are running FastAPI's ASGI server (uvicorn) with its default settings (1 worker). If we raise the worker count to the number of available CPU cores, we should see a significant jump in performance.

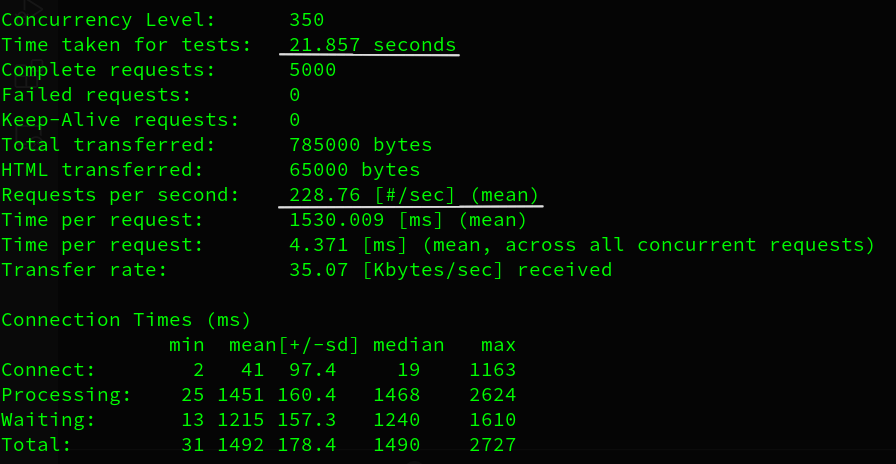

Express JS (Sync) - NodeJS

Express JS in synchronous mode handles ~447 requests per second, which is more than 2x FastAPI's synchronous throughput (~178).

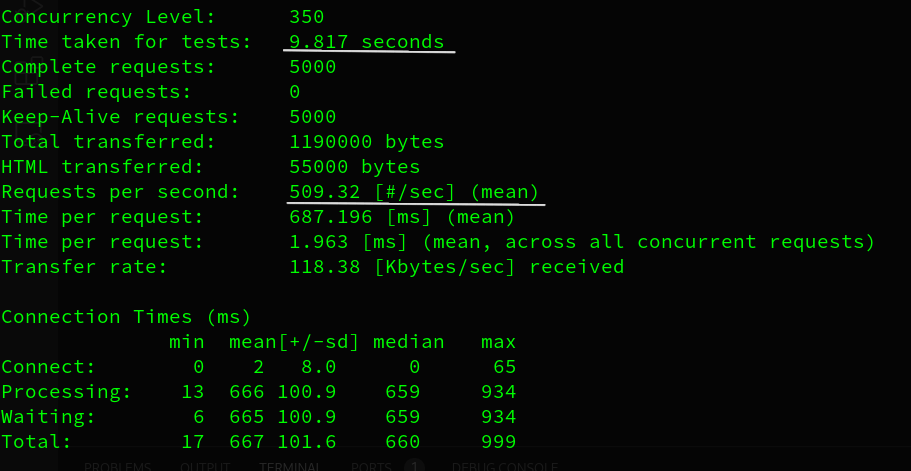

Express JS (Async) - NodeJS

A similar trend is apparent in asynchronous mode, where Express JS reaches ~509 requests per second against FastAPI's ~228. Again, note that both the FastAPI and Express JS apps are running on one worker by default, so for both frameworks we are looking at single-core performance.
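To put the single-worker numbers side by side, here is a small sketch that computes the ratios from the approximate requests-per-second figures quoted above. The `speedup` helper is just an illustrative name, and the inputs are rounded, so treat the output as indicative.

```python
# Approximate requests per second from the single-worker runs above.
results = {
    ("FastAPI", "sync"): 178,
    ("FastAPI", "async"): 228,
    ("Express JS", "sync"): 447,
    ("Express JS", "async"): 509,
}


def speedup(faster: float, slower: float) -> float:
    """How many times more requests per second `faster` handles than `slower`."""
    return faster / slower


# Express JS compared with FastAPI, mode by mode.
for mode in ("sync", "async"):
    ratio = speedup(results[("Express JS", mode)], results[("FastAPI", mode)])
    print(f"Express JS vs FastAPI ({mode}): {ratio:.2f}x")

# Gain from switching each framework from sync to async.
for framework in ("FastAPI", "Express JS"):
    ratio = speedup(results[(framework, "async")], results[(framework, "sync")])
    print(f"{framework} async vs sync: {ratio:.2f}x")
```

With these inputs, Express JS comes out at about 2.51x (sync) and 2.23x (async) the throughput of FastAPI, while going async gains FastAPI about 1.28x and Express JS about 1.14x.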

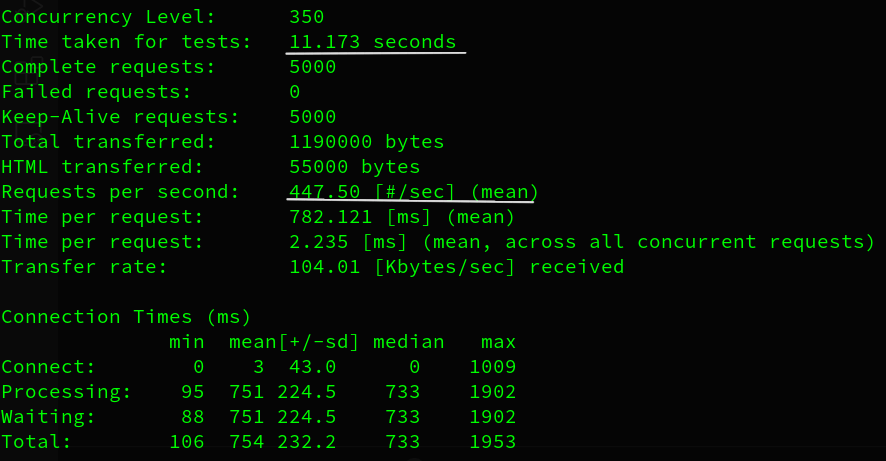

Now comes our star performer - Fiber (Golang)

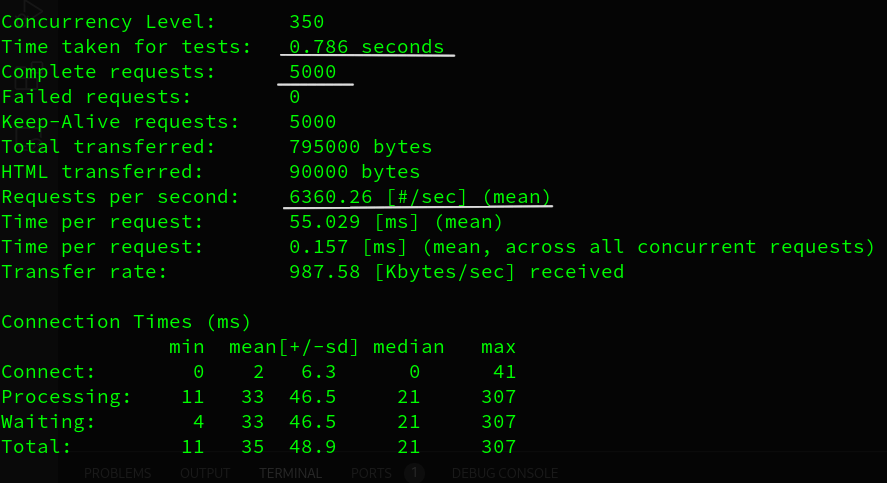

Fiber - 5k requests

Fiber takes less than a second to process 5k requests! Since the Fiber framework is implemented in Golang, concurrency is handled out of the box, which means there is no need to write async/await syntax in your code.

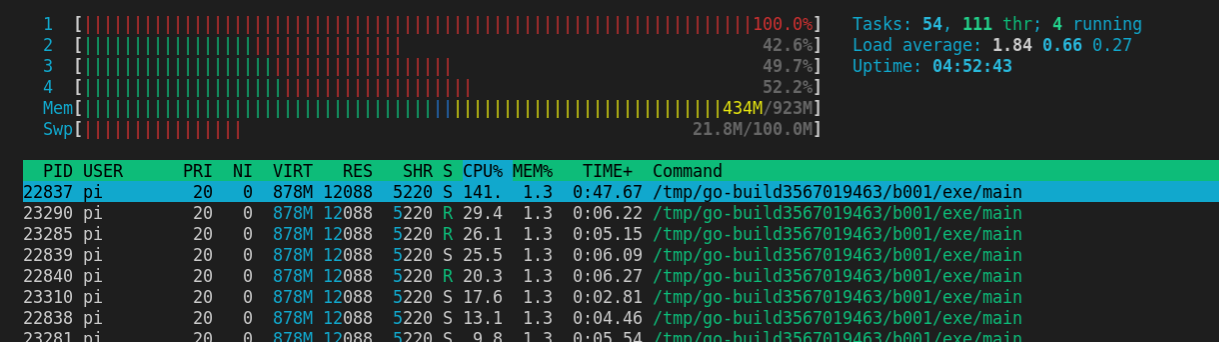

CPU utilization by Fiber (Golang)

Now, you may be wondering whether this is a fair comparison: Golang is using all CPU cores, while FastAPI and ExpressJS use only one CPU core per instance. Let's see how they perform when running on all CPU cores.

FastAPI Async with 4 workers on 4 Cores

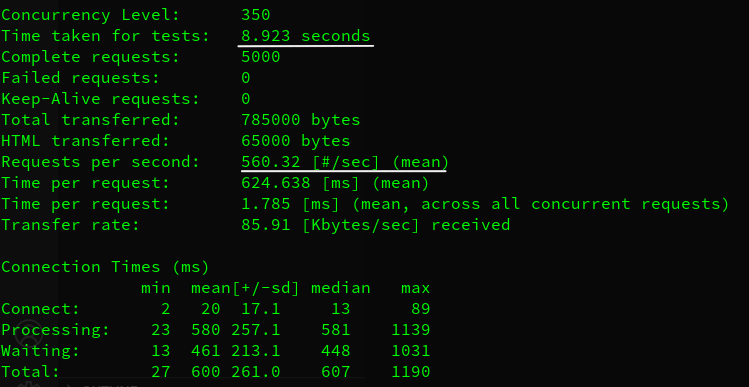

ExpressJS Async with 4 workers on 4 Cores

ExpressJS is ~1.5x faster than FastAPI. However, Fiber is still ~7.5 times faster than ExpressJS and ~11 times faster than FastAPI.

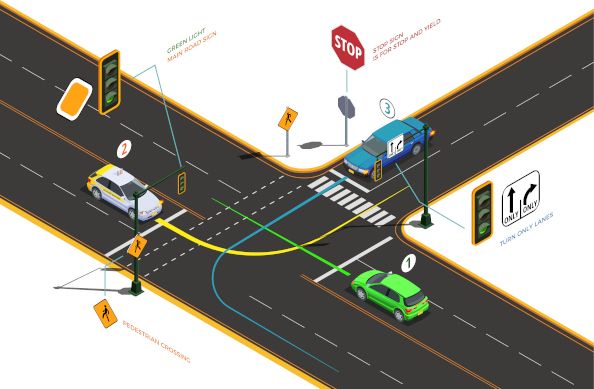

What do Sync and Async mean?

Dave is also a champion chess player, and he has been challenged to play not one but ten different players all at once! He essentially has two approaches:

#Approach 1 - Dave decides to play one player at a time. Even if the average game takes only 10 minutes (assuming the other players make quick decisions like our champ!), it still takes 10 minutes x 10 players = at least 100 minutes (i.e. over 1.6 hours).

#Approach 2 - Dave plays all players at once! How is that possible? Every time an opponent takes time to think about their next move, Dave moves on to the next player and the next. It continues till the last move of the last remaining game.

Now swap the madness of one guy playing chess against multiple people for a RESTful service. Dave is the service, and the opponents are the external databases or services/APIs with which it communicates. In Sync mode, the service waits idle for each response before doing anything else; in Async mode, it handles other requests while it waits. That's how Sync & Async work and, since we are dealing with computers and not humans, they always perform actions as instructed!
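The two approaches can be sketched with Python's standard library. This is only an illustration of the analogy, not the benchmark code: the think time, move count and function names are made up, with `time.sleep` and `asyncio.sleep` standing in for waiting on an opponent (or on a database or API call).

```python
import asyncio
import time

THINK_TIME = 0.02    # seconds an opponent "thinks" per move (scaled-down stand-in for I/O)
MOVES_PER_GAME = 3   # made-up number of moves per game
OPPONENTS = 10


def play_game_sync(opponent: int) -> int:
    """Approach 1: Dave sits at one board and waits through every think."""
    for _ in range(MOVES_PER_GAME):
        time.sleep(THINK_TIME)  # blocks; nothing else can happen meanwhile
    return opponent


async def play_game_async(opponent: int) -> int:
    """Approach 2: while this opponent thinks, Dave moves to another board."""
    for _ in range(MOVES_PER_GAME):
        await asyncio.sleep(THINK_TIME)  # yields control to the other games
    return opponent


def approach_1() -> float:
    """Play all games one after another and return the elapsed seconds."""
    start = time.perf_counter()
    for opponent in range(OPPONENTS):
        play_game_sync(opponent)
    return time.perf_counter() - start


async def approach_2() -> float:
    """Play all games concurrently and return the elapsed seconds."""
    start = time.perf_counter()
    await asyncio.gather(*(play_game_async(o) for o in range(OPPONENTS)))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"Approach 1 (sync):  {approach_1():.2f}s")
    print(f"Approach 2 (async): {asyncio.run(approach_2()):.2f}s")
```

The sequential version takes roughly the sum of all the waits, while the concurrent version takes roughly as long as a single game, because the waits overlap. Note that this only helps when the time is spent waiting; CPU-heavy work in a single process still runs one piece at a time.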

Our thoughts -

Well, it is going to be a long one and yet may not be complete. Let's get to it, shall we?

Fiber (Golang) is way ahead of the game in terms of speed and raw performance. Express (NodeJS) is ~1.5x faster than FastAPI (Python), and these two frameworks are ~7.5 and ~11.35 times slower than Fiber respectively (as per multi-core async performance).

However, this doesn't mean that you should stop using Python or NodeJS based frameworks. Factors like the existing software stack and the team's dev skills & experience will play a significant role in such decisions. Remember, good Go devs are harder to find than Python & JavaScript devs (at the time of writing this article), simply because those languages and their ecosystems have been around for longer.

FastAPI brings a few salient features, such as automatic data model documentation, JSON validation, serialization and more. One could achieve the same results in the other two frameworks by writing more code, as they are minimalistic by design.

We have used FastAPI extensively. Absolute performance becomes relevant when you are doing things at scale. These benchmarks are solely in terms of raw speed/throughput and do not consider development speed, DB I/O operations, JSON serialization and de-serialization etc.

A general recommendation is to choose FastAPI when you are working with AI/ML-based services or when your team knows Python well. The same goes for NodeJS based frameworks if your team is strong in JavaScript. There is no silver bullet here!

If you are starting a new project and are willing to give GoLang a try, Fiber is a great place to start, though there are many other frameworks to choose from.

We hope this gave you some perspective. Feel free to share comments and feedback. Let us know if we should do another post comparing full-featured frameworks from the NodeJS & Golang ecosystems, such as NestJS & Go-buffalo.

Source code -

If you want to follow further development on this project, make sure you give the repo a star! :-)
